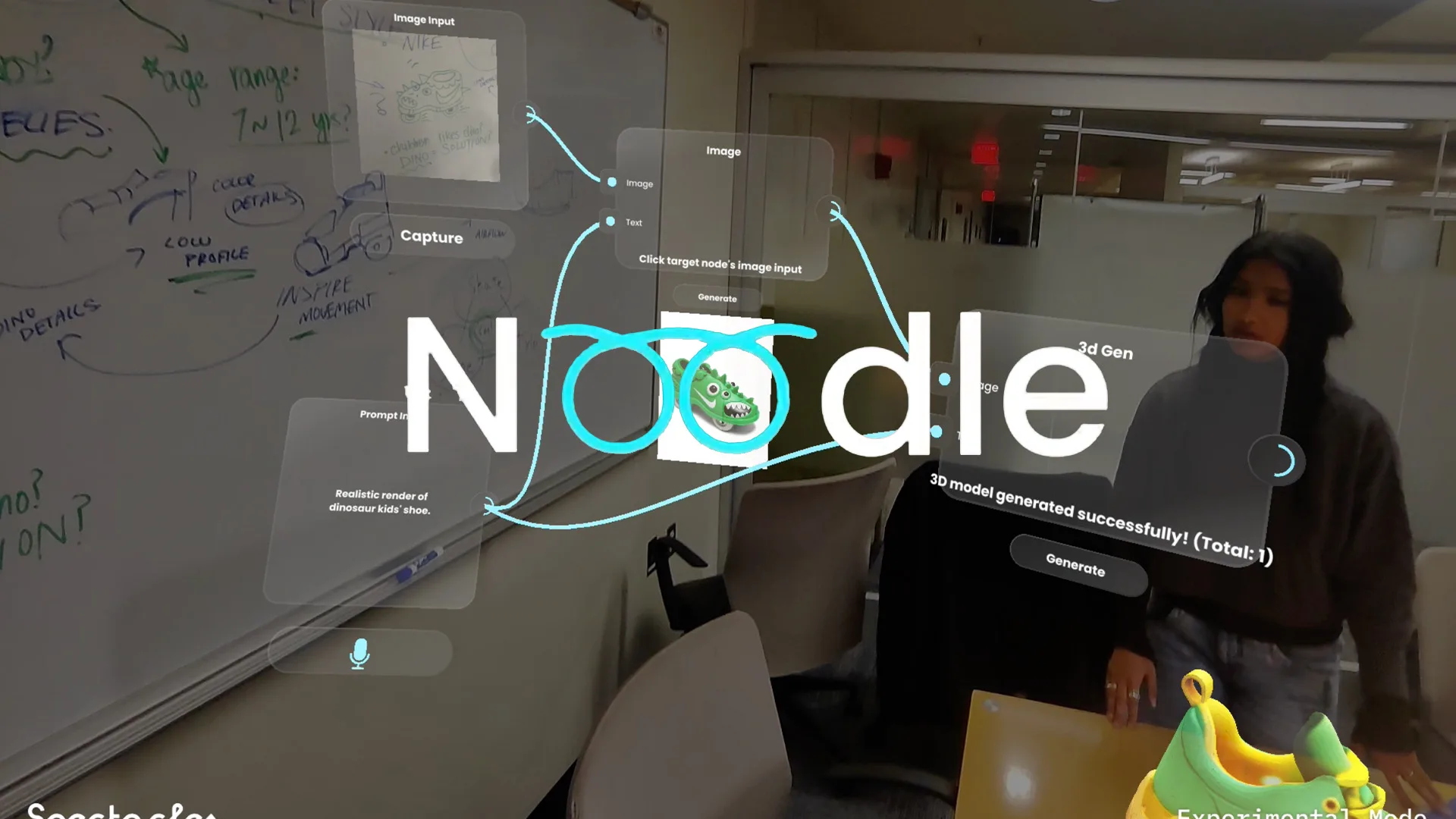

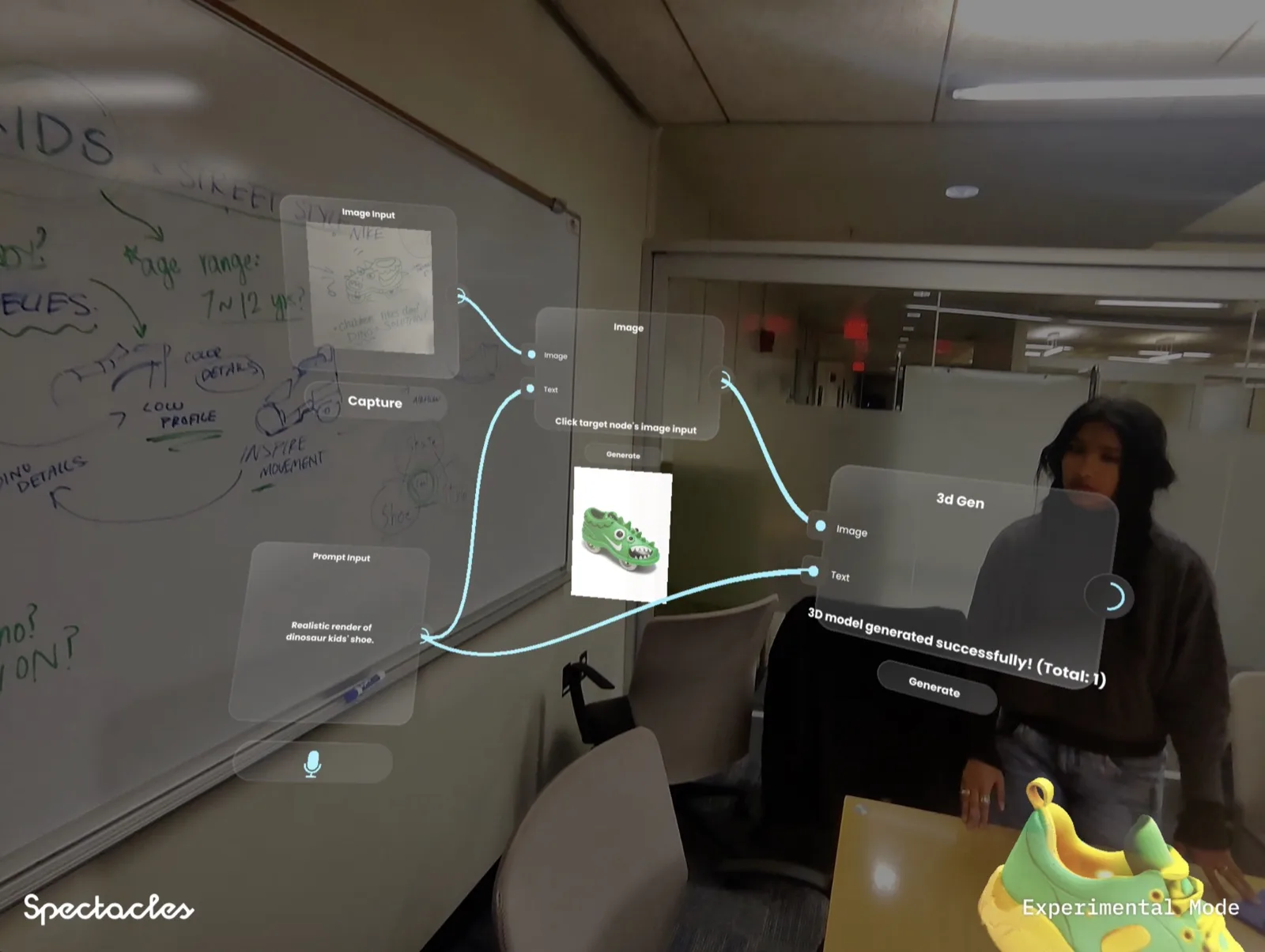

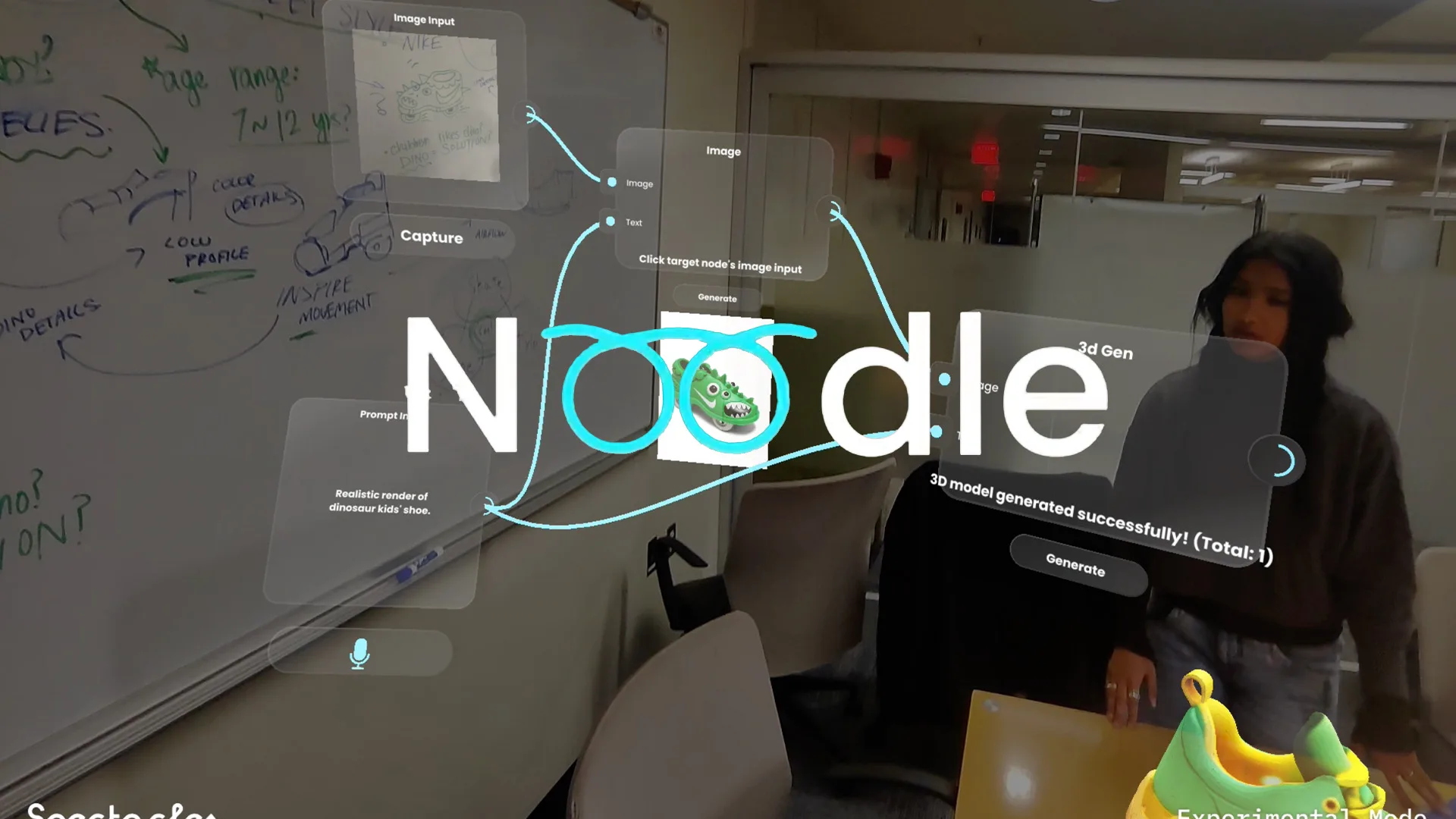

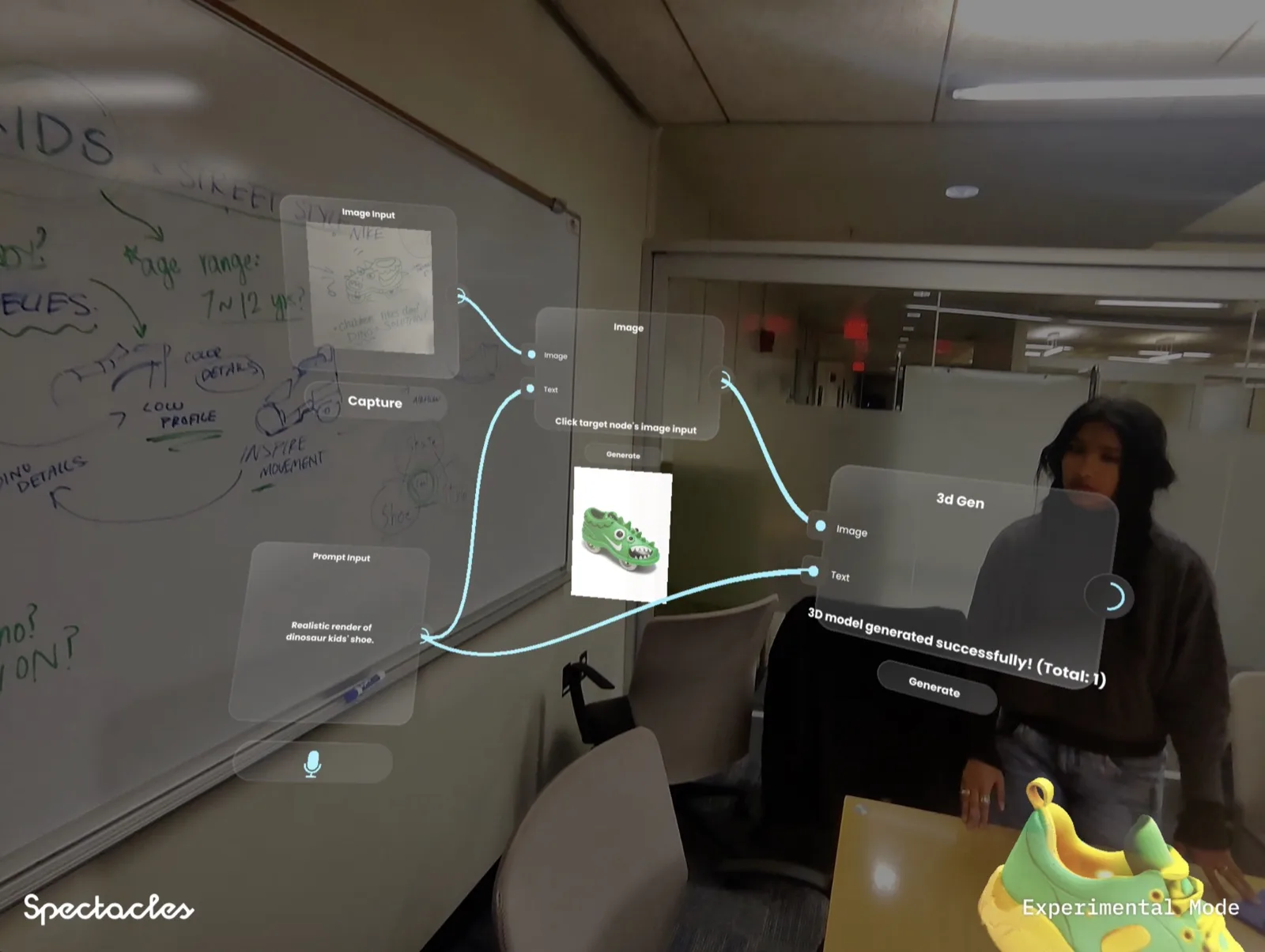

Spatial nodes, real desk, AI output.

MIT Reality Hack 2026

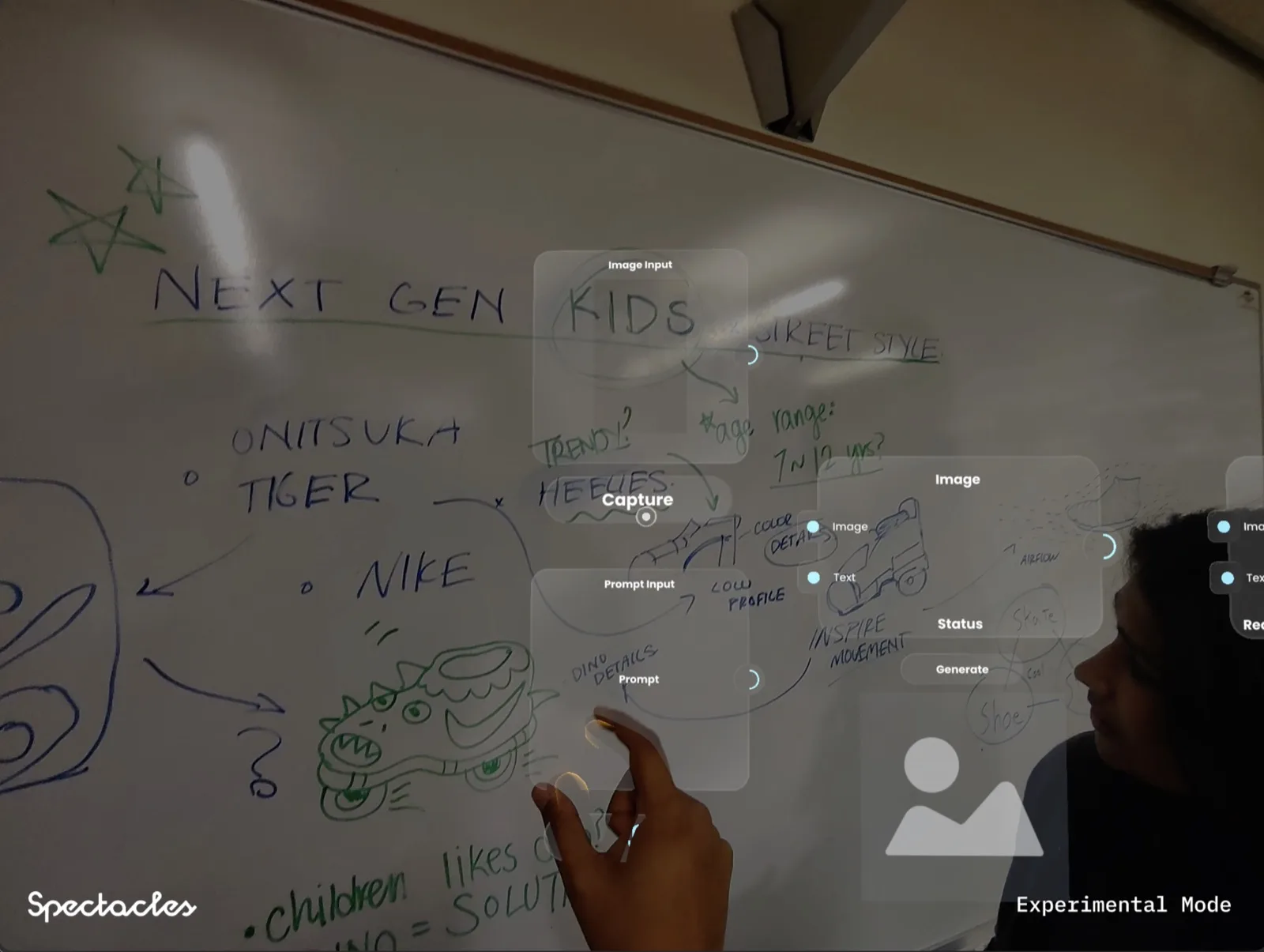

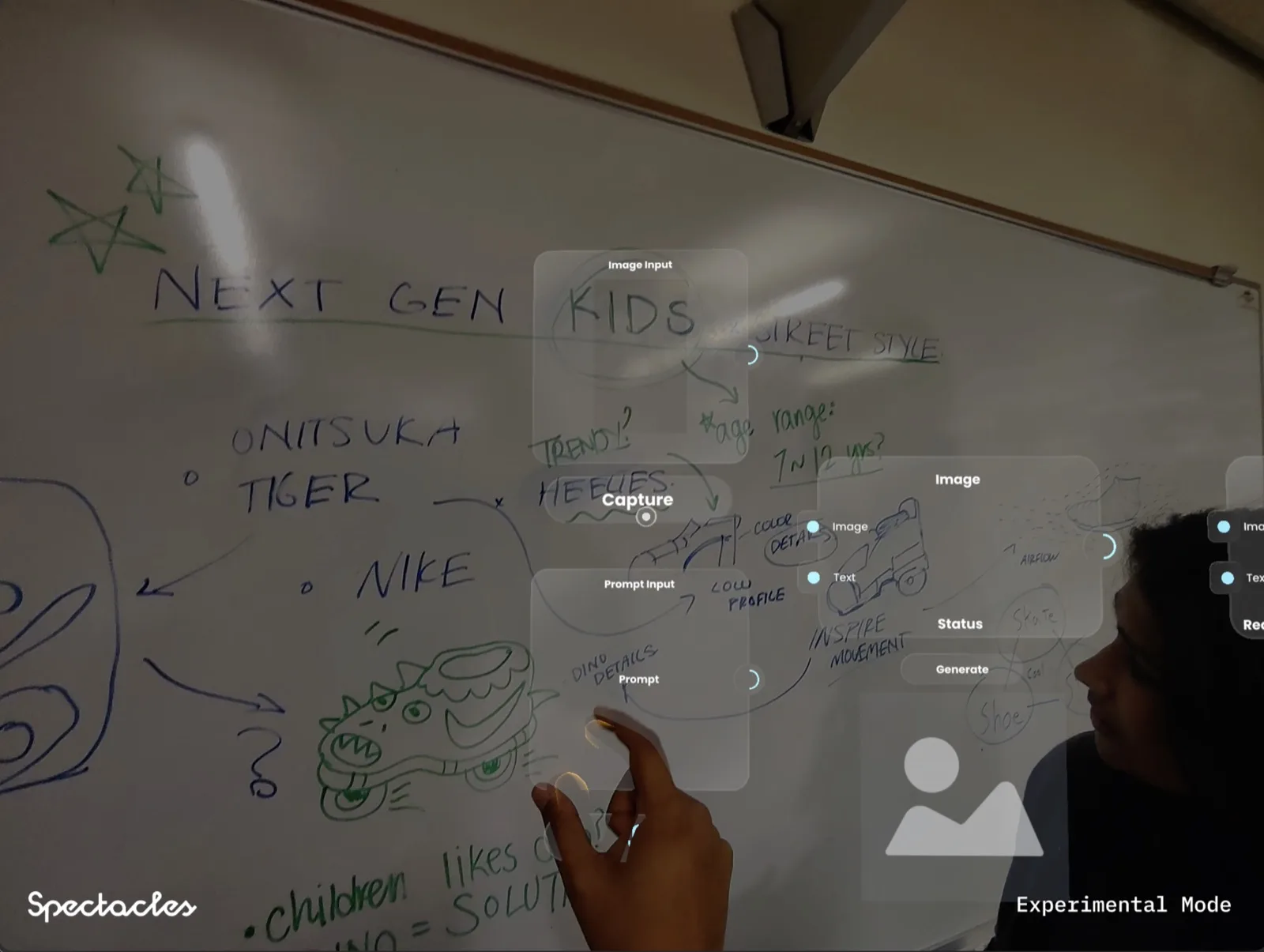

The open brief at MIT Reality Hack 2026: build something meaningful for spatial computing in 36 hours. We chose to solve a real problem for creative professionals. The constraint was the hardware: Snap Spectacles Gen 5, hand tracking only, no keyboard, no mouse.

Instead of building another AR productivity tool that digitises an existing desktop workflow, we asked what a creative workflow looks like when it starts in physical space. The answer was a node-based spatial canvas: a sketch on your real desk is the first input, your voice is the prompt, and a 3D model sitting on that same desk is the output.

No app switching, no file management, no keyboard. The entire idea-to-object pipeline in one continuous spatial flow.
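
To make the idea of a node-based pipeline concrete, here is a minimal sketch of how sketch, voice prompt and 3D output could be chained as nodes. It is an illustration under stated assumptions, not the code that ran on the Spectacles: the `Node` class, the `run_graph` helper, the node names and the placeholder values are all hypothetical, and Python is used only for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Node:
    """One step on the canvas: reads named inputs, returns one output."""
    name: str
    run: Callable[[Dict[str, str]], str]
    inputs: List[str] = field(default_factory=list)


def run_graph(nodes: List[Node]) -> Dict[str, str]:
    """Evaluate nodes in list order; each node may read earlier outputs."""
    results: Dict[str, str] = {}
    for node in nodes:
        args = {key: results[key] for key in node.inputs}
        results[node.name] = node.run(args)
    return results


# Placeholder steps (assumptions): stand-ins for capturing the desk sketch,
# transcribing the spoken prompt, and calling a generative 3D service.
pipeline = [
    Node("sketch", lambda _: "desk_sketch.png"),
    Node("prompt", lambda _: "a ceramic teapot"),
    Node(
        "model",
        lambda a: f"mesh({a['sketch']}, {a['prompt']})",
        inputs=["sketch", "prompt"],
    ),
]

outputs = run_graph(pipeline)
print(outputs["model"])
```

Each node only sees the outputs it names as inputs, which is what lets a canvas like this be rewired by hand rather than through menus or files.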

We work with brands and platforms at the edge of spatial computing. Tell us what you're building.